Digital Gallery

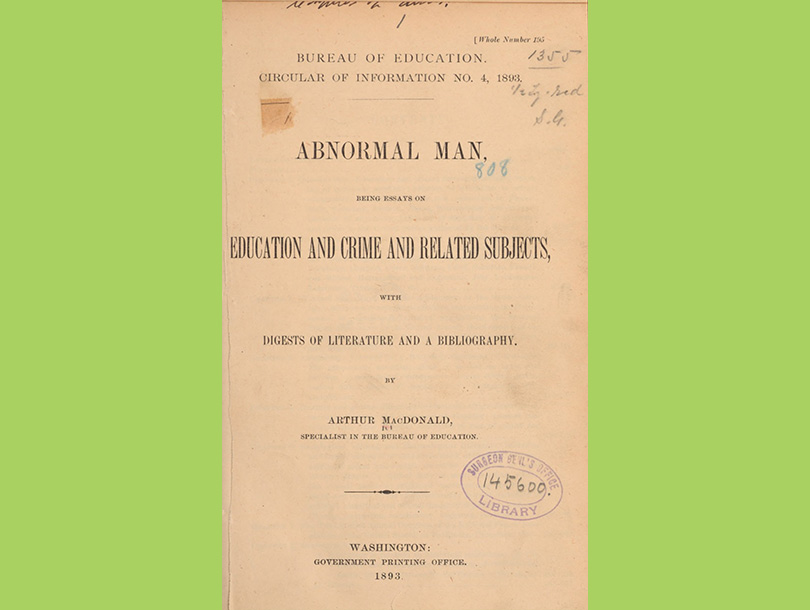

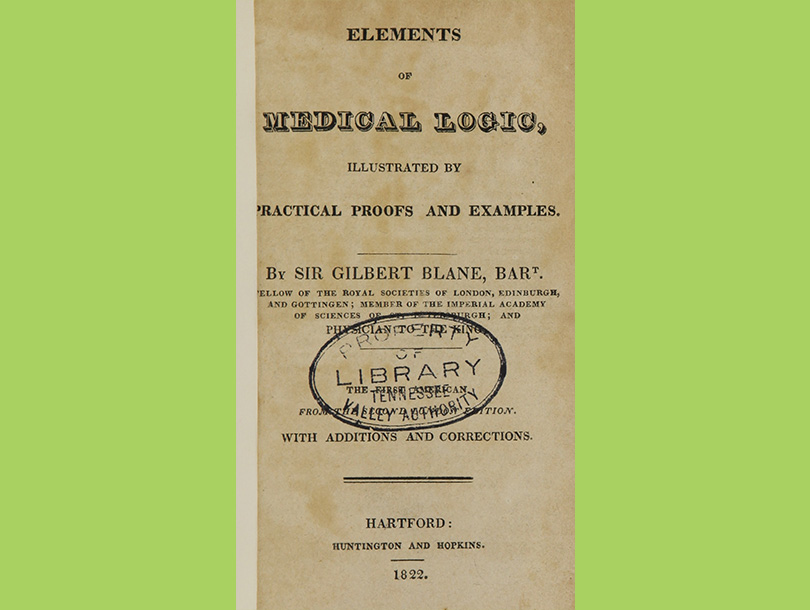

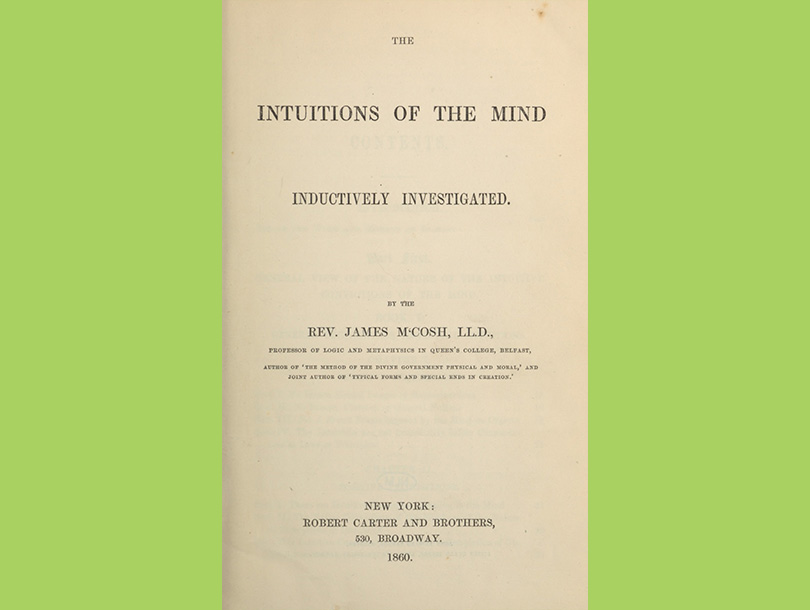

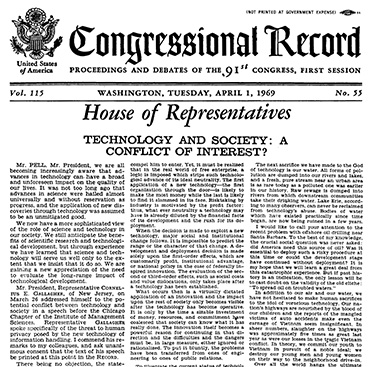

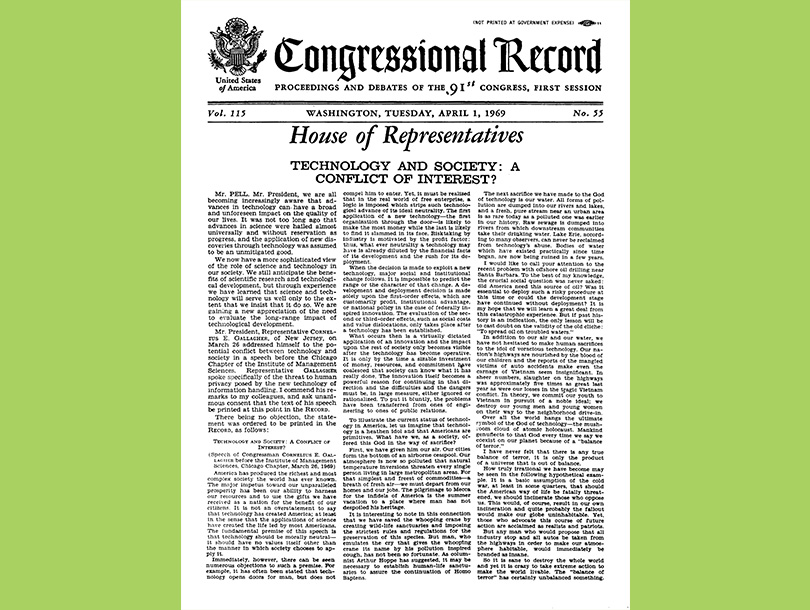

We have discarded many disproven historical theories that used faulty science to buttress inaccurate ideas. Still, the possibility that flawed methodology, unsound reasoning, and bad information could taint scientific study is ever-present. AI researchers face the challenge of avoiding bad inputs and damaging impacts as they develop technologies that teach machines to learn and to perform processes automatically. Explore this curated selection of items from the NLM collection related to debunked historical ideas about personal attributes like intelligence and character, historical reasoning, and efforts to ensure accuracy and reliability, mitigate bias, and prevent harmful consequences in late 20th-century AI research.